Category: Web Design History

-

What a year that was.

Know your web design history.

-

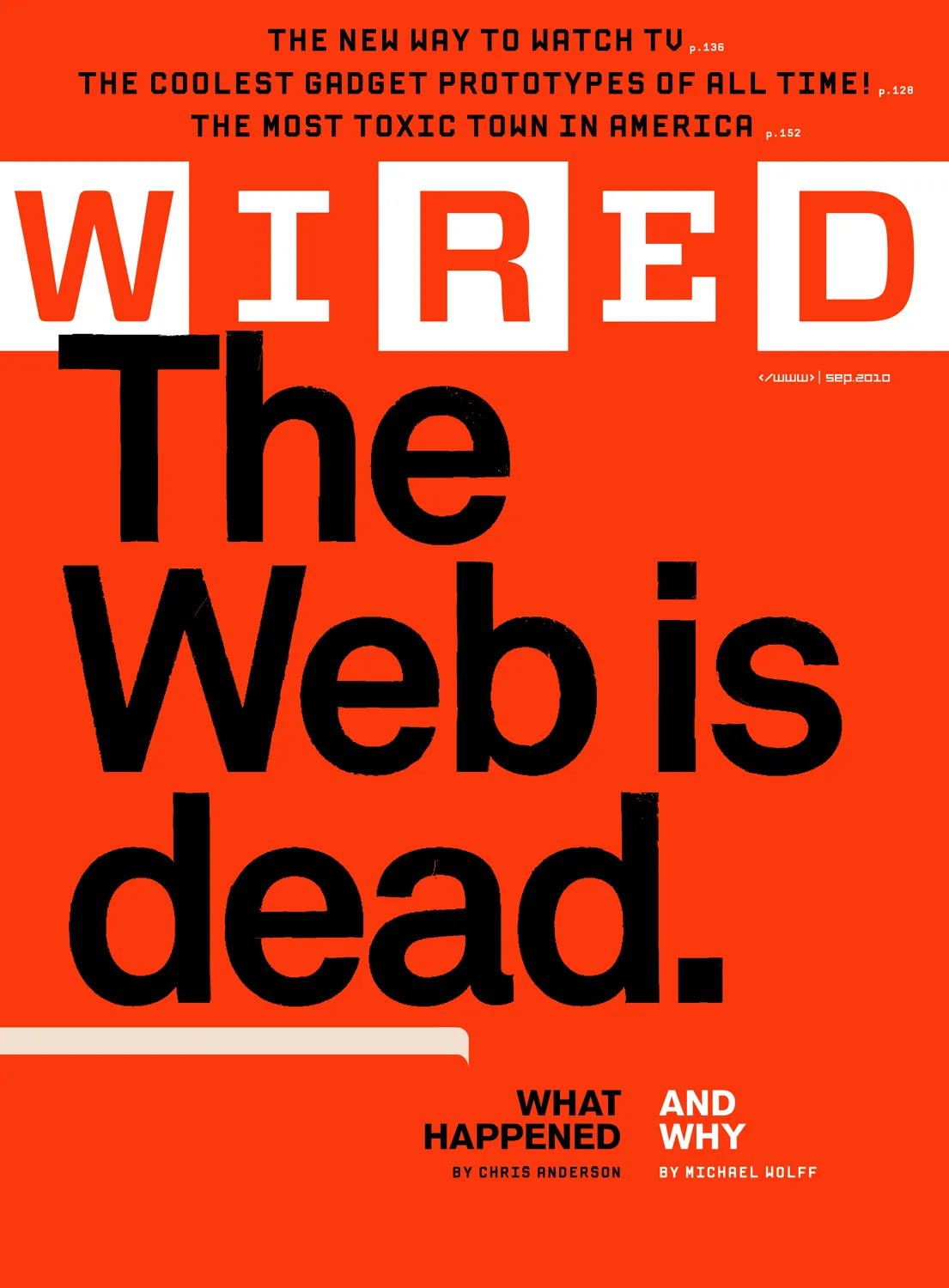

Receipts: a brief history of the death of the web.

They say AI will replace the web as we know it, and this time they mean it. Here follows a short list of previous times they also meant it, starting way back in 1997. Wired:…

-

Of Books and Conferences Past

Of books and conferences past: A maker looks back on things well-made but no longer with us.

-

How to Join Blue Beanie Day: Wear and Share!

Saturday, 30 November 2024, marks the 17th annual Blue Beanie Day celebration. It’s hard to believe, but web standards fan Douglas Vos conceived of this holiday way back in ’07: The origin of the name…

-

Web Design Inspiration

If you’re finding today a bit stressful for some reason, grab a respite by sinking into any of these web design inspiration websites.

-

Ah yes, the famous “intern did it” syndrome

Poachers, when caught stealing content from our website, always blamed the theft on an “intern” or “freelancer.” We always pretended to believe them.